There are many approaches to sequential testing, several of which are well explained and compared in this Spotify blog post.

For GrowthBook, we selected a method that would work for the wide variety of experimenters that we serve, while also providing experimenters with a way to tune the approach for their setting.

There are two ways to deal with peeking. The first is simply not to peek. This is possible, but in a lot of cases it can make decision making worse! It is powerful to be able to see bad results and shut a feature down early, or conversely to see a great feature do well right away, end the experiment, and ship to everyone. The second is to use an estimator that restores our control over the false positive rate. Sequential testing provides estimators for the second option: it allows peeking at your results without fear of inflating the false positive rate beyond your specified $\alpha$.
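As an illustration of what such an estimator can look like, here is a minimal sketch of one well-known construction: an asymptotic confidence sequence based on a normal mixture boundary. It is not taken from this article and should not be read as GrowthBook's implementation. The function names, the default `rho = 0.05`, and the use of a single-sample mean are all assumptions made for the example; `rho` plays the role of a tuning parameter that controls at which sample sizes the interval is tightest.

```python
import math


def fixed_half_width(sigma_hat, n, z_crit=1.959964):
    """Half-width of a classic fixed-sample 95% interval for a mean."""
    return z_crit * sigma_hat / math.sqrt(n)


def sequential_half_width(sigma_hat, n, alpha=0.05, rho=0.05):
    """Half-width of an asymptotic confidence sequence for a mean.

    Uses the two-sided normal mixture boundary:
        sigma * sqrt( 2(n*rho^2 + 1) / (n^2 * rho^2)
                      * log( sqrt(n*rho^2 + 1) / alpha ) )
    sigma_hat is the estimated standard deviation of one observation.
    rho is a hypothetical tuning parameter chosen for this sketch.
    """
    if n <= 0 or rho <= 0 or not 0 < alpha < 1:
        raise ValueError("n and rho must be positive, alpha in (0, 1)")
    n_rho2 = n * rho ** 2
    scale = 2.0 * (n_rho2 + 1.0) / (n ** 2 * rho ** 2)
    log_term = math.log(math.sqrt(n_rho2 + 1.0) / alpha)
    return sigma_hat * math.sqrt(scale * log_term)


if __name__ == "__main__":
    for n in (100, 1000, 10000):
        print(n, fixed_half_width(1.0, n), sequential_half_width(1.0, n))
```

With `sigma_hat = 1` and `n = 1000`, the sequential half-width comes out at roughly 0.10 versus roughly 0.06 for the fixed-sample interval. That extra width is the price paid for an interval that remains valid no matter how often you look.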

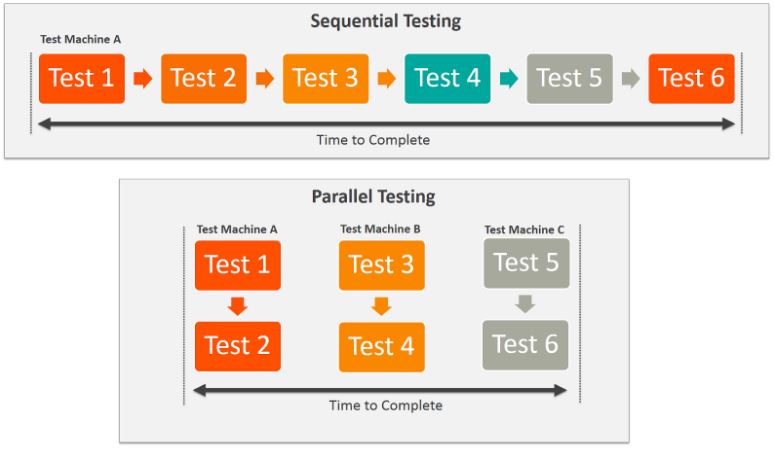

Sequential testing allows you to look at your experiment results as many times as you like while still keeping the number of false positives below the expected rate. In other words, it is the frequentist solution to the peeking problem in A/B testing. Sequential testing not only reduces the risk of false positives in online experimentation, it can also increase the velocity of experimentation. Traditional frequentist inference requires an experimenter to wait until a pre-determined sample size is collected. With sequential testing, decisions can be made as soon as significance is reached, without fear of inflating the false positive rate, although sequential testing produces wider confidence intervals than fixed-sample testing.

What is peeking? First, some background on frequentist testing. When running a frequentist analysis, the experimenter sets a significance level, often written as $\alpha$ (equivalently, a confidence level of $1 - \alpha$). Many people, and GrowthBook, default to using $\alpha = 5\%$ (sometimes it is written as $\alpha = 0.05$). These all mean the same thing: for a correctly specified frequentist analysis, you will only reject the null hypothesis 5% of the time when the null hypothesis is true. Usually in online experimentation, our null hypothesis is that metric averages in two variations are the same. In other words, $\alpha$ controls the False Positive Rate.

However, experimenters often violate a fundamental assumption underpinning frequentist analysis: that one must wait until some pre-specified time (or sample size) before looking at and acting upon experiment results. If you violate this assumption, and peek at your results, you will end up with an inflated False Positive Rate, far above your nominal 5% level. In other words, if we check an experiment as it runs, we are essentially increasing the number of times we can get a positive, even if there is no experiment effect, just through random noise.
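To make the peeking problem concrete, the following is a minimal simulation sketch. It runs many A/A tests (both variations drawn from the same distribution, so any "significant" result is a false positive) and compares two decision rules: rejecting if the test is significant at any interim look, versus rejecting only at the final pre-specified sample size. The sample sizes, the peeking schedule, and the known-variance z-test are assumptions chosen to keep the example short.

```python
import math
import random

Z_CRIT = 1.959964  # two-sided critical value for alpha = 0.05


def simulate_aa_test(n_per_arm, peek_every, rng):
    """Run one A/A test with no true effect.

    Returns (rejected_at_any_peek, rejected_at_final_look).
    """
    sum_a = sum_b = 0.0
    rejected_at_any_peek = False
    for n in range(1, n_per_arm + 1):
        sum_a += rng.gauss(0.0, 1.0)
        sum_b += rng.gauss(0.0, 1.0)
        if n % peek_every == 0:
            # z-statistic for the difference in means, assuming a known
            # unit variance per observation in each arm
            z = (sum_a - sum_b) / math.sqrt(2.0 * n)
            if abs(z) > Z_CRIT:
                rejected_at_any_peek = True
    # Decision of an experimenter who only looks once, at the end
    final_z = (sum_a - sum_b) / math.sqrt(2.0 * n_per_arm)
    return rejected_at_any_peek, abs(final_z) > Z_CRIT


def false_positive_rates(n_sims=1000, n_per_arm=1000, peek_every=50, seed=0):
    """Estimate false positive rates with and without peeking."""
    rng = random.Random(seed)
    peeking = fixed = 0
    for _ in range(n_sims):
        any_peek, final = simulate_aa_test(n_per_arm, peek_every, rng)
        peeking += any_peek
        fixed += final
    return peeking / n_sims, fixed / n_sims


if __name__ == "__main__":
    peek_fpr, fixed_fpr = false_positive_rates()
    print(f"FPR when acting on any of 20 peeks: {peek_fpr:.3f}")
    print(f"FPR when looking only at the end:   {fixed_fpr:.3f}")
```

In runs of this sketch, the single-look rate should sit near the nominal 5%, while the rate under repeated peeking typically lands well above it. More frequent peeks push it higher still.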
Sequential Testing is only implemented for the Frequentist statistics engine, and is a premium feature.
